Bigger isn’t always better: How the hybrid AI pattern enables smaller language models

As large language models (LLMs) have entered the common vernacular, people have discovered how to use apps that access them. Modern AI tools can generate, summarize, translate, classify and even converse. Generative AI tools respond to prompts by drawing on patterns learned from existing artifacts.

One area that has not seen much innovation is the far edge, on constrained devices. Some AI apps run locally on mobile devices, such as those with embedded language translation features, but we haven’t reached the point where LLMs generate value outside of cloud providers.

However, there are smaller models that have the potential to bring gen AI capabilities to mobile devices. Let’s examine these solutions from the perspective of a hybrid AI model.

The basics of LLMs

LLMs are a special class of AI models powering this new paradigm, and natural language processing (NLP) is what enables their language capabilities. Developers train LLMs on massive amounts of data from various sources, including the internet. Their billions of parameters, learned during training, are what make them so large.

While LLMs are knowledgeable about a wide range of topics, their knowledge is limited to the data on which they were trained. This means they are not always “current” or accurate. Because of their size, LLMs are typically hosted in the cloud on beefy hardware deployments with lots of GPUs.

This means that enterprises looking to mine information from their private or proprietary business data cannot use LLMs out of the box. To answer specific questions, generate summaries or create briefs, they must either supply their data to public LLMs or create their own models. A common way to supply one’s own data to an LLM is retrieval-augmented generation, known as the RAG pattern. In this gen AI design pattern, relevant external data is retrieved and added to the prompt at inference time, so the model can use it without being retrained.
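To make the RAG pattern concrete, here is a minimal sketch in Python. It assumes a toy retriever that ranks documents by bag-of-words cosine similarity; production systems typically use vector embeddings and a vector database instead. The documents and the function names `retrieve` and `build_prompt` are hypothetical, and no actual LLM is called: the sketch stops at the augmented prompt that would be sent to one.

```python
import math
import re
from collections import Counter

# Hypothetical private enterprise documents that a public LLM was never trained on.
DOCS = [
    "Cell site 1042 reported packet loss above 2% during peak hours.",
    "The Q3 retention offer gives 10% off unlimited plans.",
    "Base station firmware 7.3 fixes the handover timeout bug.",
]


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query (toy retriever)."""
    q = Counter(tokenize(query))
    ranked = sorted(documents, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)
    return ranked[:k]


def build_prompt(query: str, documents: list[str], k: int = 2) -> str:
    """Augment the user's question with retrieved context before it reaches the LLM."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\nQuestion: {query}"


if __name__ == "__main__":
    # The resulting string is what would be sent to the (unchanged) LLM.
    print(build_prompt("Which cell site reported packet loss?", DOCS, k=1))
```

Because the enterprise data travels inside the prompt, the LLM itself does not need to be retrained when that data changes.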

Is smaller better?

Enterprises that operate in specialized domains, such as telcos, healthcare or oil and gas companies, have a laser focus. While they can and do benefit from typical gen AI scenarios and use cases, they would be better served by smaller models.

In the case of telcos, for example, some of the common use cases are AI assistants in contact centers, personalized offers in service delivery and AI-powered chatbots for enhanced customer experience. Use cases that help telcos improve the performance of their network, increase spectral efficiency in 5G networks or help them determine specific bottlenecks in their network are best served by the enterprise’s own data (as opposed to a public LLM).

That brings us to the notion that smaller is better. Small language models (SLMs) are “smaller” in size compared to LLMs: SLMs have up to tens of billions of parameters, while LLMs have hundreds of billions. More importantly, SLMs are trained on data pertaining to a specific domain. They might not have broad contextual information, but they perform very well in their chosen domain.

Because of their smaller size, these models can be hosted in an enterprise’s data center instead of the cloud. SLMs might even run on a single GPU chip at scale, saving thousands of dollars in annual computing costs. However, with advancements in chip design, the line between what must run in the cloud and what can run in an enterprise data center is becoming less clear.
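A back-of-the-envelope calculation shows why parameter count largely decides where a model can run. The sketch below estimates the memory needed to hold the weights (parameters times bytes per parameter). The 20% overhead for activations and the KV cache, the 80 GB GPU size and the 7B and 175B model sizes are illustrative assumptions, not measured figures, and the helper names are hypothetical.

```python
import math

# Approximate storage per parameter for common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Memory needed just to hold the model weights, in gigabytes (1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def gpus_needed(num_params: float, precision: str,
                gpu_memory_gb: float = 80.0, overhead: float = 1.2) -> int:
    """Rough GPU count. `overhead` is an assumed 20% allowance for activations
    and the KV cache; the 80 GB default is an assumed GPU memory size."""
    required = weight_memory_gb(num_params, precision) * overhead
    return math.ceil(required / gpu_memory_gb)


if __name__ == "__main__":
    # Hypothetical model sizes: a 7B SLM and a 175B LLM.
    for name, params in [("7B SLM", 7e9), ("175B LLM", 175e9)]:
        for precision in ("fp16", "int4"):
            print(f"{name} @ {precision}: "
                  f"{weight_memory_gb(params, precision):.1f} GB weights, "
                  f"~{gpus_needed(params, precision)} GPU(s)")
```

Under these assumptions, a 7B model in 16-bit precision needs about 14 GB for its weights and fits on one GPU, while a 175B model needs roughly 350 GB and must be split across several GPUs.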

Whether because of cost, data privacy or data sovereignty, enterprises might want to run these SLMs in their data centers. Many enterprises are reluctant to send their data to the cloud. Another key reason is performance. Gen AI at the edge performs computation and inferencing as close to the data as possible, which can make it faster and more secure than sending the data to a cloud provider.

It is worth noting that SLMs require less computational power, which makes them well suited for deployment in resource-constrained environments and even on mobile devices.

An on-premises example might be an IBM Cloud® Satellite location, which has a secure high-speed connection to IBM Cloud, where the LLMs are hosted. Telcos could host these SLMs at their base stations and offer this option to their clients as well. It is all a matter of optimizing the use of GPUs: as the distance that data must travel decreases, latency drops and less network bandwidth is consumed.

How small can you go?

Back to the original question: can these models run on a mobile device? The mobile device might be a high-end phone, an automobile or even a robot. Device manufacturers have discovered that running LLMs requires significant compute and memory bandwidth. Tiny LLMs are smaller models that can run locally on mobile phones and medical devices.

Developers use techniques such as low-rank adaptation (LoRA) to customize these models. LoRA lets users fine-tune a model to unique requirements while keeping the number of trainable parameters relatively low. In fact, there is even a TinyLlama project on GitHub.
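Simple arithmetic shows why LoRA keeps trainable parameters low. LoRA freezes a pretrained weight matrix W of shape d × k and learns a low-rank update B·A, where B is d × r and A is r × k, so only r(d + k) parameters are trained instead of d·k. The sketch below assumes a 4096 × 4096 matrix and rank r = 8 purely for illustration. The helper names are hypothetical, and the forward pass uses plain Python lists rather than a real training framework.

```python
def full_finetune_params(d: int, k: int) -> int:
    """Trainable parameters when every entry of a d x k weight matrix is updated."""
    return d * k


def lora_params(d: int, k: int, r: int) -> int:
    """Trainable parameters with LoRA: the frozen d x k matrix W gets a
    low-rank update B @ A, where B is d x r and A is r x k."""
    return d * r + r * k


def matvec(matrix: list[list[float]], vector: list[float]) -> list[float]:
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def lora_forward(W: list[list[float]], A: list[list[float]], B: list[list[float]],
                 x: list[float], alpha: float) -> list[float]:
    """h = W x + (alpha / r) * B (A x); during fine-tuning only A and B change."""
    r = len(A)  # rank = number of rows in A
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / r
    return [b + scale * u for b, u in zip(base, update)]


if __name__ == "__main__":
    d = k = 4096  # assumed size of one projection matrix
    r = 8         # assumed LoRA rank
    full, lora = full_finetune_params(d, k), lora_params(d, k, r)
    print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.2%}")
```

For these sizes the script prints `full: 16,777,216  lora: 65,536  ratio: 0.39%`. In the original LoRA formulation, B is initialized to zero, so the adapted model starts out behaving exactly like the base model.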

Chip manufacturers are developing chips that can run trimmed-down versions of LLMs and image diffusion models, created through techniques such as knowledge distillation. Systems-on-chip (SoCs) and neural processing units (NPUs) help edge devices run gen AI tasks.
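Knowledge distillation trains a small student model to imitate a larger teacher. The sketch below shows one common formulation, soft-target distillation: the student is penalized by the KL divergence between the teacher’s and the student’s temperature-softened output distributions, blended with ordinary cross-entropy on the true label. The temperature, the blending weight `alpha` and the example logits are assumptions, and this is not a description of any particular chip vendor’s pipeline.

```python
import math


def softmax(logits: list[float], temperature: float = 1.0) -> list[float]:
    """Numerically stable softmax; a higher temperature gives a softer distribution."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits: list[float], teacher_logits: list[float],
                      label: int, temperature: float = 2.0, alpha: float = 0.5) -> float:
    """Blend of (a) KL(teacher || student) on temperature-softened outputs and
    (b) ordinary cross-entropy of the student on the true label."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(pt * math.log(pt / ps) for pt, ps in zip(p_teacher, p_student) if pt > 0)
    hard = -math.log(softmax(student_logits)[label])
    # The T^2 factor keeps the soft-target term on a comparable scale as T changes.
    return alpha * temperature ** 2 * kl + (1 - alpha) * hard


if __name__ == "__main__":
    teacher = [4.0, 1.0, 0.5]  # hypothetical logits from a large model
    student = [2.0, 1.5, 1.0]  # hypothetical logits from a small model
    print(distillation_loss(student, teacher, label=0))
```

Minimizing this loss over many examples pushes the student toward the teacher’s full output distribution, not just its top answer, which is what lets a much smaller model retain much of the larger model’s behavior.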

While some of these concepts are not yet in production, solution architects should consider what is possible today. SLMs working and collaborating with LLMs may be a viable solution. Enterprises can decide to use existing smaller specialized AI models for their industry or create their own to provide a personalized customer experience.

Is hybrid AI the answer?

While running SLMs on-premises seems practical and tiny LLMs on mobile edge devices are enticing, what if the model requires a larger corpus of data to respond to some prompts?

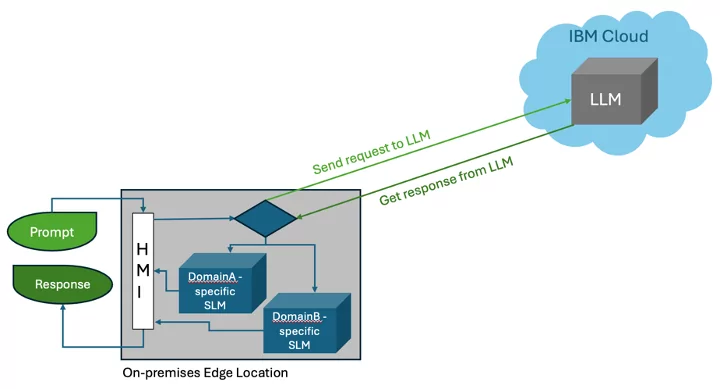

Hybrid cloud computing offers the best of both worlds. Might the same be applied to AI models? The image below shows this concept.

When smaller models fall short, the hybrid AI model could provide the option to access an LLM in the public cloud. Enabling this makes sense: enterprises could keep their data secure within their premises by using domain-specific SLMs and still access LLMs in the public cloud when needed. As mobile devices with SoCs become more capable, this seems like a more efficient way to distribute generative AI workloads.
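The routing decision at the heart of the hybrid AI model can be sketched in a few lines. This minimal Python example assumes each model returns an answer with a confidence score between 0 and 1; `fake_slm`, `fake_llm` and the 0.7 threshold are hypothetical stand-ins for real on-premises and cloud endpoints.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Answer:
    text: str
    confidence: float  # assumed 0.0-1.0 score reported alongside each answer
    source: str


def hybrid_answer(prompt: str,
                  slm: Callable[[str], Answer],
                  llm: Callable[[str], Answer],
                  threshold: float = 0.7) -> Answer:
    """Try the on-premises SLM first; escalate to the cloud LLM only when
    the SLM's confidence falls below the threshold."""
    local = slm(prompt)
    if local.confidence >= threshold:
        return local
    return llm(prompt)


# Hypothetical stand-ins for real model endpoints.
def fake_slm(prompt: str) -> Answer:
    in_domain = "cell site" in prompt.lower()
    return Answer("local answer", 0.9 if in_domain else 0.3, "on-prem-slm")


def fake_llm(prompt: str) -> Answer:
    return Answer("cloud answer", 0.8, "cloud-llm")


if __name__ == "__main__":
    for q in ("What is the status of cell site 1042?", "Summarize this week's tech news."):
        print(q, "->", hybrid_answer(q, fake_slm, fake_llm).source)
```

Keeping the SLM as the first stop means in-domain prompts, and the data they carry, stay on premises; only prompts the SLM cannot handle confidently are escalated. In practice, the confidence signal might come from token log-probabilities or a separate classifier.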

IBM® recently announced the availability of the open source Mistral AI Model on its watson™ platform. This compact LLM requires fewer resources to run, yet it is just as effective as traditional LLMs and outperforms them. IBM also released a Granite 7B model as part of its highly curated, trustworthy family of foundation models.

It is our contention that enterprises should focus on building small, domain-specific models with internal enterprise data to differentiate their core competency and use insights from their data (rather than venturing to build their own generic LLMs, which they can easily access from multiple providers).

Bigger is not always better

Telcos are a prime example of enterprises that would benefit from adopting this hybrid AI model. They have a unique role, as they can be both consumers and providers. Similar scenarios may apply to healthcare, oil rigs, logistics companies and other industries. Are the telcos prepared to make good use of gen AI? We know they have a lot of data, but do they have a time-series model that fits the data?

When it comes to AI models, IBM has a multimodel strategy to accommodate each unique use case. Bigger is not always better: specialized models can outperform general-purpose models while requiring less infrastructure.

