SIBYL: A machine learning-based framework for forecasting dynamic workloads

This paper was presented at the ACM SIGMOD/Principles of Database Systems Conference (SIGMOD/PODS 2024), the premier forum on large-scale data management and databases.

In today’s fast-paced digital landscape, data analysts are increasingly dependent on analytics dashboards to monitor customer engagement and app performance. However, as data volumes increase, these dashboards can slow down, leading to delays and inefficiencies. One solution is to use software designed to optimize how data is physically stored and retrieved, but the challenge remains in anticipating the specific queries analysts will run, a task complicated by the dynamic nature of modern workloads.

In our paper, “SIBYL: Forecasting Time-Evolving Query Workloads,” presented at SIGMOD/PODS 2024, we introduce a machine learning-based framework designed to accurately predict queries in dynamic environments. This innovation allows traditional optimization tools, typically meant for static settings, to seamlessly adapt to changing workloads, ensuring consistent high performance as query demands evolve.

SIBYL’s design and features

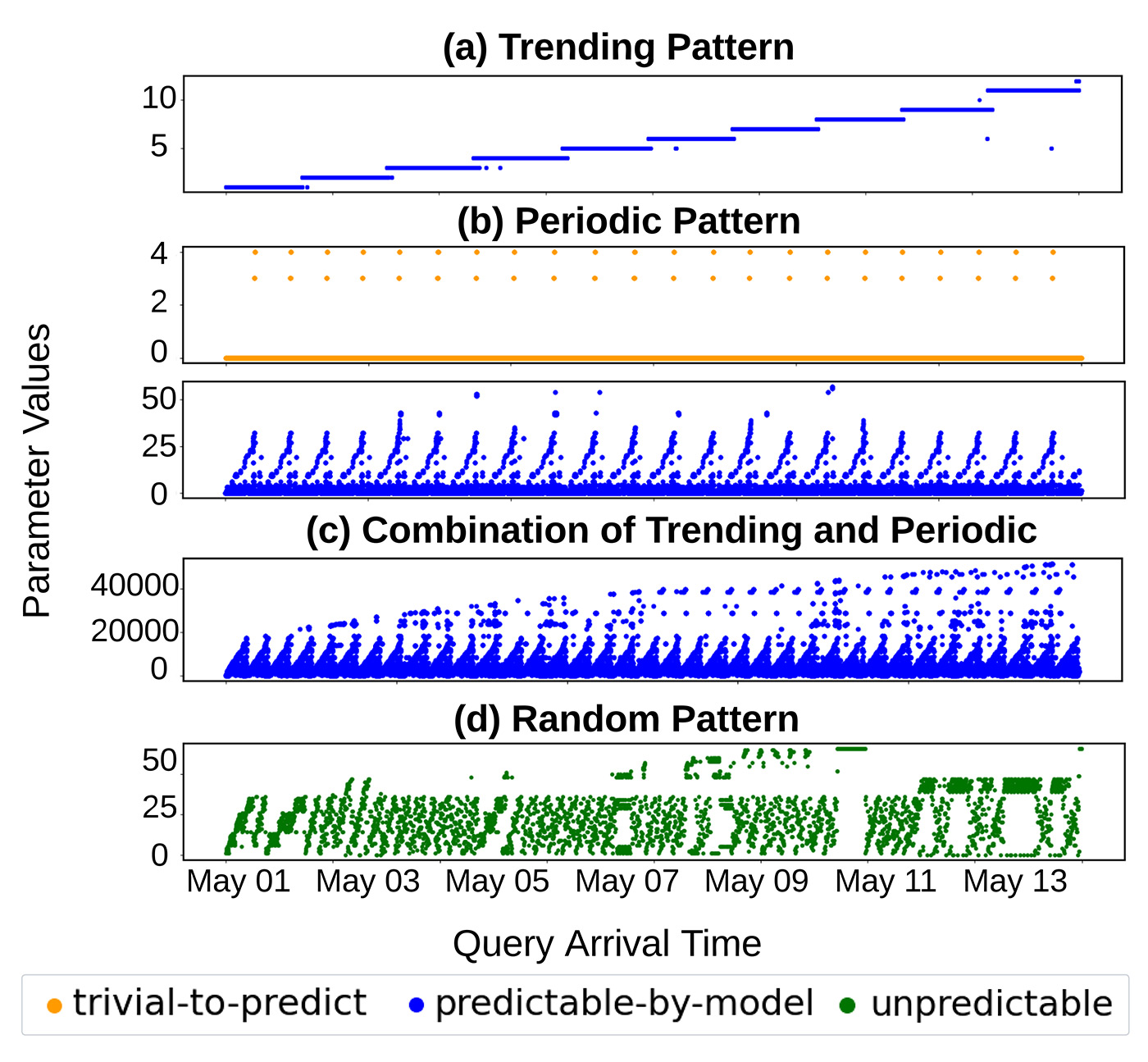

SIBYL’s framework is informed by studies of real-world workloads, which show that most are dynamic but follow predictable patterns. We identified the following recurring patterns in how query parameters change over time:

- Trending: Queries that increase, decrease, or remain steady over time.

- Periodic: Queries that occur at regular intervals, such as hourly or daily.

- Combination: A mix of trending and periodic patterns.

- Random: Queries with unpredictable patterns.

These insights, illustrated in Figure 1, form the basis of SIBYL’s ability to forecast query workloads, enabling databases to maintain peak efficiency even as usage patterns shift.
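To make the four pattern categories concrete, here is a minimal sketch of how a single numeric query parameter, sampled at regular intervals, could be labeled. It is not SIBYL's method: the functions `detrend`, `autocorr`, and `classify`, the 0.5 thresholds, and the caller-supplied `candidate_periods` are all assumptions chosen for illustration. A good straight-line fit stands in for "trending," and a strong autocorrelation of the detrended values at a candidate period stands in for "periodic."

```python
import math
from statistics import mean


def detrend(xs):
    """Fit a least-squares line to xs (len >= 2); return (residuals, r_squared)."""
    n = len(xs)
    t_mean = (n - 1) / 2
    x_mean = mean(xs)
    stt = sum((t - t_mean) ** 2 for t in range(n))
    stx = sum((t - t_mean) * (x - x_mean) for t, x in enumerate(xs))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    slope = stx / stt
    resid = [x - (x_mean + slope * (t - t_mean)) for t, x in enumerate(xs)]
    if sxx == 0:
        return resid, 1.0  # a flat ("steady") series is fit perfectly by a line
    return resid, 1.0 - sum(r * r for r in resid) / sxx


def autocorr(xs, lag):
    """Sample autocorrelation of xs at the given lag (0.0 for a constant series)."""
    m = mean(xs)
    denom = sum((x - m) ** 2 for x in xs)
    if denom == 0:
        return 0.0
    num = sum((xs[i] - m) * (xs[i + lag] - m) for i in range(len(xs) - lag))
    return num / denom


def classify(xs, candidate_periods=(12,), threshold=0.5):
    """Label a parameter series as trending, periodic, combination, or random.

    The thresholds and candidate periods are illustrative assumptions.
    """
    resid, r2 = detrend(xs)
    has_trend = r2 >= threshold
    # Skip the periodicity check when the line explains everything, so that
    # floating-point dust in the residuals is not mistaken for a signal.
    has_period = r2 < 1.0 - 1e-9 and any(
        autocorr(resid, p) >= threshold for p in candidate_periods if p < len(xs)
    )
    if has_trend and has_period:
        return "combination"
    if has_trend:
        return "trending"
    if has_period:
        return "periodic"
    return "random"


n = 48
trend = [2 * t + 5 for t in range(n)]
season = [10 + 3 * math.sin(2 * math.pi * t / 12) for t in range(n)]
both = [0.5 * t + 3 * math.sin(2 * math.pi * t / 12) for t in range(n)]
labels = [classify(trend), classify(season), classify(both)]
```

A production system would need a more robust test (for example, searching over periods rather than taking them as input), but the sketch shows why the categories are separable: trend and periodicity are measured on different parts of the signal.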

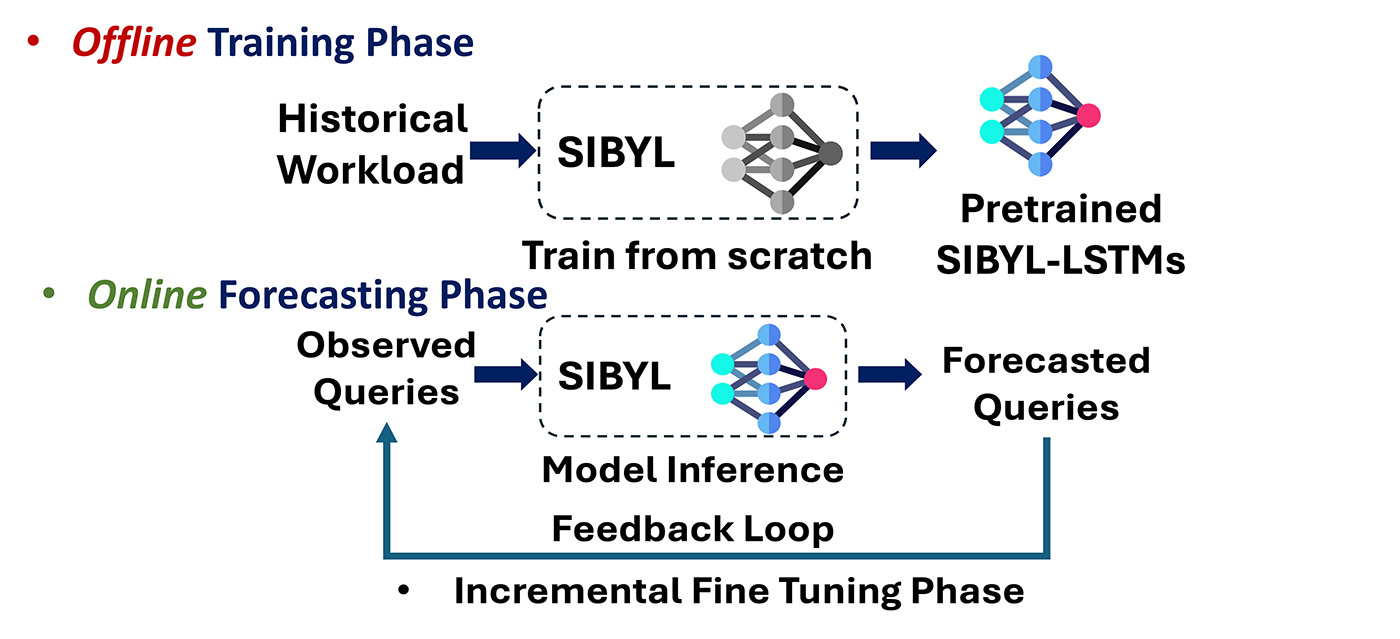

SIBYL uses machine learning to analyze historical data and parameters to predict queries and arrival times. SIBYL’s architecture, illustrated in Figure 2, operates in three phases:

- Training: It uses historical query logs and arrival times to build machine learning models.

- Forecasting: It employs pretrained models to predict future queries and their timing.

- Incremental fine-tuning: It continuously adapts to new workload patterns through an efficient feedback loop.
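The three phases can be sketched as a small interface. The class below is a toy stand-in, not SIBYL's model: `ToyForecaster`, its `alpha` smoothing factor, and the `(template, value)` log format are hypothetical, and the "model" is just an exponentially smoothed step size per query template. What it illustrates is the division of labor: `train` builds state from a historical log, `forecast` extrapolates from that state, and `fine_tune` folds in new observations without rebuilding from scratch.

```python
from dataclasses import dataclass, field


@dataclass
class ToyForecaster:
    """Toy per-template forecaster for one numeric query parameter."""

    alpha: float = 0.5                          # smoothing factor (assumed)
    last: dict = field(default_factory=dict)    # template -> last seen value
    delta: dict = field(default_factory=dict)   # template -> smoothed step

    def _observe(self, template, value):
        # Update the smoothed step size, then remember the latest value.
        if template in self.last:
            step = value - self.last[template]
            prev = self.delta.get(template, step)
            self.delta[template] = self.alpha * step + (1 - self.alpha) * prev
        self.last[template] = value

    def train(self, log):
        """Phase 1: build state from a historical log of (template, value) pairs."""
        self.last.clear()
        self.delta.clear()
        for template, value in log:
            self._observe(template, value)

    def forecast(self, template, horizon):
        """Phase 2: predict the next `horizon` parameter values for a template."""
        step = self.delta.get(template, 0.0)
        return [self.last[template] + step * k for k in range(1, horizon + 1)]

    def fine_tune(self, new_log):
        """Phase 3: fold in new observations without retraining from scratch."""
        for template, value in new_log:
            self._observe(template, value)


model = ToyForecaster()
model.train([("q1", 10), ("q1", 12), ("q1", 14)])
before = model.forecast("q1", 2)      # [16.0, 18.0]
model.fine_tune([("q1", 20)])         # the workload shifts upward
after = model.forecast("q1", 1)       # [24.0]
```

This sketch forecasts only a parameter value; predicting arrival times, as SIBYL does, would need a second output alongside it.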

Challenges and innovations in designing a forecasting framework

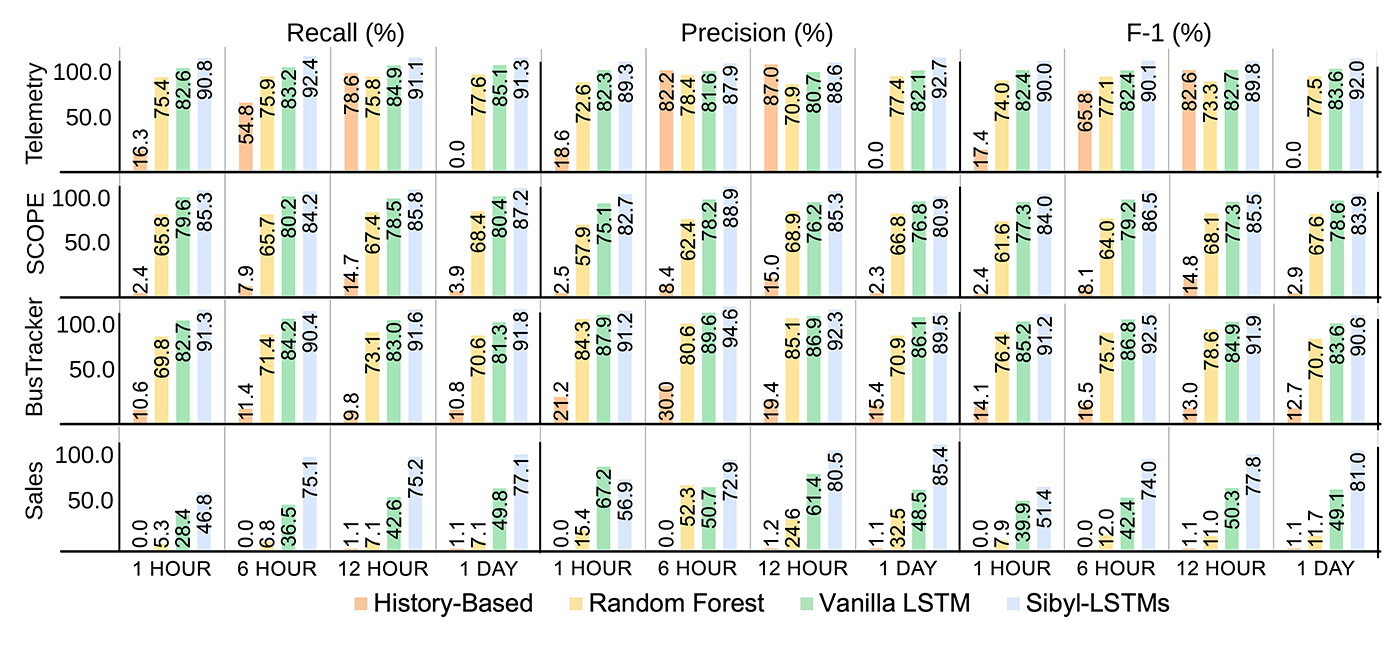

Designing an effective forecasting framework is challenging, particularly in managing the varying number of queries and the complexity of creating separate models for each type of query. SIBYL addresses these by grouping high-volume queries and clustering low-volume ones, supporting scalability and efficiency. As demonstrated in Figure 3, SIBYL consistently outperforms other forecasting models, maintaining accuracy over different time intervals and proving its effectiveness in dynamic workloads.
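As an illustration of why separating high-volume from low-volume queries limits the number of models, the sketch below gives each high-volume template its own model and packs the low-volume templates into shared clusters. This post does not describe how SIBYL forms its groups or clusters, so everything here is an assumption: the `plan_models` function, the `high_volume_min` and `cluster_capacity` parameters, and the greedy first-fit packing by query count are hypothetical choices, not the paper's algorithm.

```python
from collections import Counter


def plan_models(templates, high_volume_min=100, cluster_capacity=100):
    """Split query templates into dedicated models and shared clusters.

    templates: iterable of template identifiers, one entry per logged query.
    Returns (dedicated, clusters), where clusters is a list of dicts with
    the member templates and their combined query volume.
    """
    counts = Counter(templates)
    dedicated = sorted(t for t, c in counts.items() if c >= high_volume_min)
    # Largest low-volume templates first; ties broken by name for determinism.
    low = sorted(
        ((t, c) for t, c in counts.items() if c < high_volume_min),
        key=lambda tc: (-tc[1], tc[0]),
    )
    clusters = []
    for template, count in low:
        for cluster in clusters:
            # First-fit: reuse the first cluster that still has room.
            if cluster["volume"] + count <= cluster_capacity:
                cluster["templates"].append(template)
                cluster["volume"] += count
                break
        else:
            clusters.append({"templates": [template], "volume": count})
    return dedicated, clusters


log = ["A"] * 5 + ["B"] * 3 + ["C"] * 2 + ["D"] * 1
dedicated, clusters = plan_models(log, high_volume_min=5, cluster_capacity=4)
```

With four templates, this plan needs three models instead of four. The saving grows with the long tail of rarely issued templates that real workloads tend to have.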

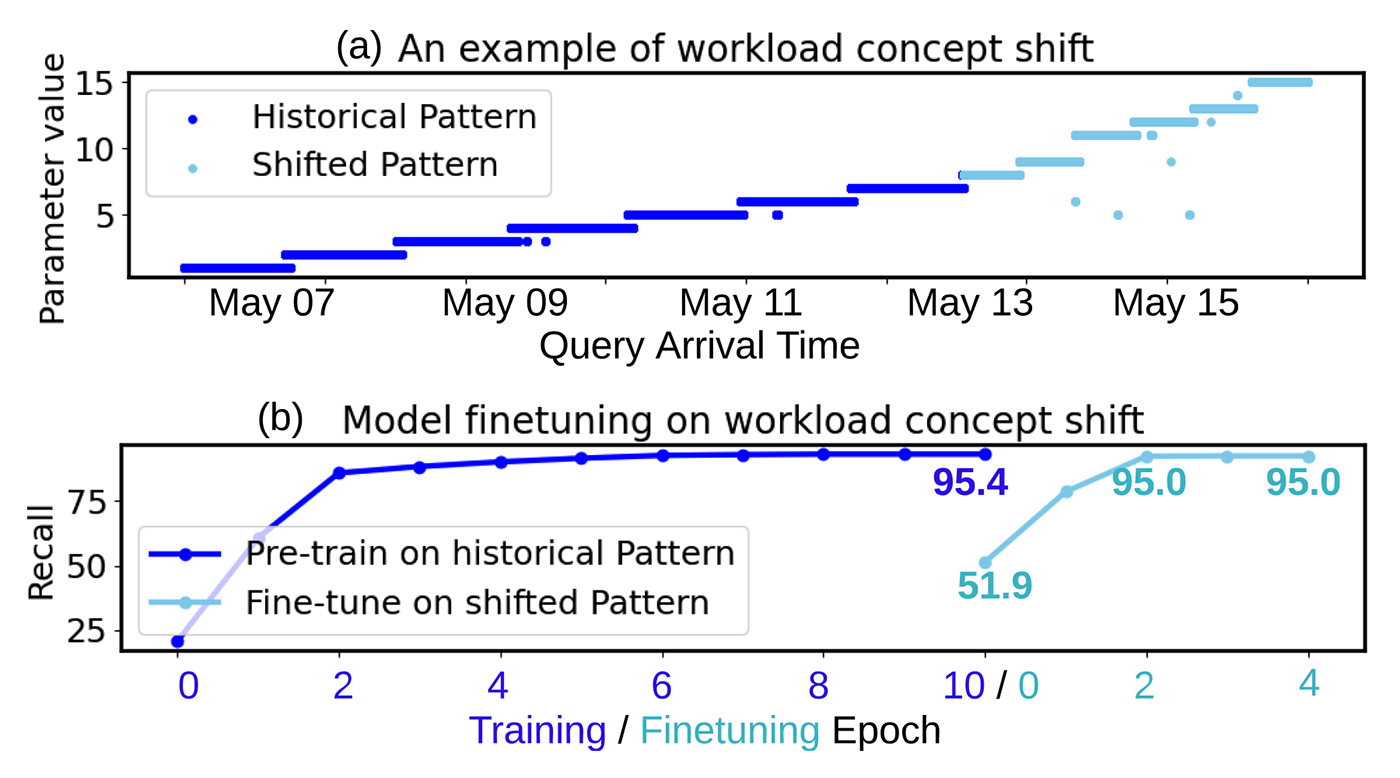

SIBYL adapts to changes in workload patterns by continuously learning, retaining high accuracy with minimal adjustments. As shown in Figure 4, the model reaches 95% accuracy after fine-tuning in just 6.4 seconds, nearly matching its initial accuracy of 95.4%.

To address slow dashboard performance, we tested SIBYL by using it to create materialized views, which are precomputed query results stored so that later queries run faster. Creating these views involves identifying common tasks and recommending which ones to store in advance, which expedites future queries.

We trained SIBYL on 2,237 queries from 20 days of anonymized Microsoft sales data, which let us create materialized views for the following day. Views based on historical data alone improved query performance by a factor of 1.06, while views based on SIBYL’s predictions improved it by a factor of 1.83. This demonstrates that SIBYL’s ability to forecast future workloads can significantly improve database performance.
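To put the two factors on a common scale, the short calculation below converts each into the fraction of runtime saved. It assumes that each factor is a speedup ratio (baseline runtime divided by new runtime) and that both use the same baseline. The post does not state either point, and `runtime_fraction_saved` is a hypothetical helper.

```python
def runtime_fraction_saved(speedup: float) -> float:
    """Fraction of baseline runtime removed by a given speedup factor."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return 1.0 - 1.0 / speedup


history_only = runtime_fraction_saved(1.06)   # about 0.057
with_forecast = runtime_fraction_saved(1.83)  # about 0.454
relative_gain = 1.83 / 1.06                   # about 1.73
```

Under those assumptions, views built from history alone save roughly 6% of runtime, while views built from forecasts save roughly 45%. That makes the forecast-based approach about 1.73 times faster than the history-based one.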

Implications and looking ahead

SIBYL’s ability to predict dynamic workloads has numerous applications beyond improving materialized views. It can help organizations scale resources efficiently, leading to reduced costs. It can also improve query performance by automatically organizing data so that the most frequently accessed data is always available. Moving forward, we plan to integrate more machine learning techniques to make SIBYL more efficient and to reduce the effort needed for setup. We expect this to improve how databases handle dynamic workloads, making them faster and more reliable.

Acknowledgments

We would like to thank our paper co-authors for their valuable contributions and efforts: Jyoti Leeka, Alekh Jindal, and Jishen Zhao.