AI education: CA teachers use AI to grade papers

In summary

California schools are using more chatbots, and teachers are using them to grade papers and give students feedback.

Your children could be some of a growing number of California kids having their writing graded by software instead of a teacher.

California school districts are signing more contracts for artificial intelligence tools, from automated grading in San Diego to chatbots in central California, Los Angeles, and the San Francisco Bay Area.

English teachers say AI tools can help them grade papers faster, get students more feedback, and improve their learning experience. But guidelines are vague and adoption by teachers and districts is spotty.

The California Department of Education can’t tell you which schools use AI or how much they pay for it. The state doesn’t track AI use by school districts, said Katherine Goyette, computer science coordinator for the California Department of Education.

While Goyette said chatbots are the most common form of AI she’s encountered in schools, more and more California teachers are using AI tools to help grade student work. That’s consistent with surveys that have found teachers use AI as often as students, if not more, news that contrasts sharply with headlines about fears of students cheating with AI.

Teachers use AI to personalize reading material, create lesson plans, and handle other tasks in order to save time and reduce burnout. A report issued last fall in response to an AI executive order by Gov. Gavin Newsom mentions opportunities to use AI for tutoring, summarization, and personalized content generation, but also labels education a risky use case. Generative AI tools have been known to create convincing but inaccurate answers to questions, and use toxic language or imagery laden with racism or sexism.

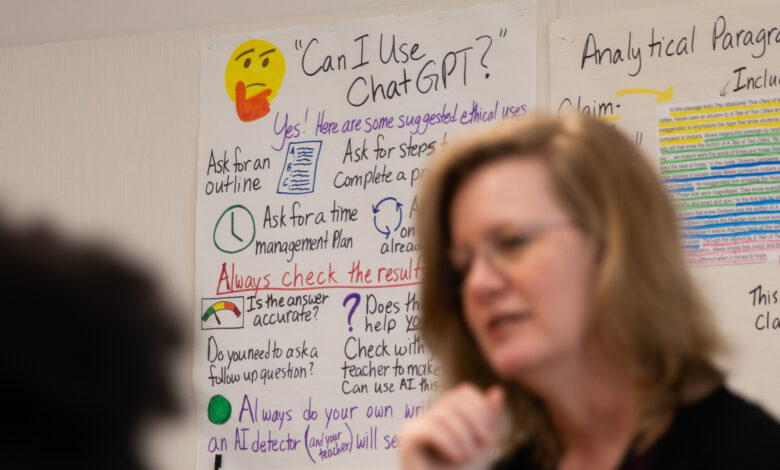

California issued guidance for how educators should use the technology last fall, one of seven states to do so. It encourages critical analysis of text and imagery created by AI models and conversations between teachers and students about what amounts to ethical or appropriate use of AI in the classroom.

But no specific mention is made of how teachers should treat AI that grades assignments. Additionally, the California education code states that guidance from the state is “merely exemplary, and that compliance with the guidelines is not mandatory.”

Goyette said she’s waiting to see if the California Legislature passes Senate Bill 1288, which would require state Superintendent Tony Thurmond to create an AI working group to issue further guidance to local school districts on how to safely use AI. Cosponsored by Thurmond, the bill also calls for an assessment of the current state of AI in education and for the identification of forms of AI that can harm students and educators by 2026.

Nobody tracks what AI tools school districts are adopting or the policy they use to enforce standards, said Alix Gallagher, head of strategic partnerships at the Policy Analysis for California Education center at Stanford University. Since the state does not track curriculum that school districts adopt or software in use, it would be highly unusual for them to track AI contracts, she said.

Amid AI hype, Gallagher thinks people can lose sight of the fact that the technology is just a tool, and it will only be as good or problematic as the decisions of the humans using it. That is why she repeatedly urges investments in helping teachers understand AI tools and how to be thoughtful about their use, and in making space for communities to have a voice in how to best meet their kids’ needs.

“Some people will probably make some pretty bad decisions that are not in the best interests of kids, and some other people might find ways to use maybe even the same tools to enrich student experiences,” she said.

Teachers use AI to grade English papers

Last summer, Jen Roberts, an English teacher at Point Loma High School in San Diego, went to a training session to learn how to use Writable, an AI tool that automates grading writing assignments and gives students feedback powered by OpenAI. For the past school year, Roberts used Writable and other AI tools in the classroom, and she said it’s been the best year yet of nearly three decades of teaching. Roberts said it has made her students better writers, not because AI did the writing for them, but because automated feedback can tell her students faster than she can how to improve, which in turn allows her to hand out more writing assignments.

“At this point last year, a lot of students were still struggling to write a paragraph, let alone an essay with evidence and claims and reasoning and explanation and elaboration and all of that,” Roberts said. “This year, they’re just getting there faster.”

Roberts feels Writable is “very accurate” when grading her students of average aptitude. But, she said, there’s a downside: It sometimes assigns high-performing students lower grades than merited and struggling students higher grades. She said she routinely checks answers when the AI grades assignments, but only checks the feedback it gives students occasionally.

“In actual practicality, I do not look at the feedback it gives every single student,” she said. “That’s just not a great use of my time. But I do a lot of spot checking and I see what’s going on and if I see a student that I’m worried about get feedback, (I’m like) ‘Let me go look at what his feedback is and then go talk to him about that.’”

Alex Rainey teaches English to fourth graders at Chico Country Day School in northern California. She used GPT-4, a language model made by OpenAI which costs $20 a month, to grade papers and provide feedback. After uploading her grading rubric and examples of her written feedback, she used AI to grade assignments about animal defense mechanisms, allowing GPT-4 to analyze students’ grammar and sentence structure while she focused on assessing creativity.

“I feel like the feedback it gave was very similar to how I grade my kids, like my brain was tapped into it,” she said.

Like Roberts, she found that it saves time, turning work that took hours into less than an hour, but also found that GPT-4 is sometimes a tougher grader than she is. She agrees that quicker feedback and the ability to dole out more writing assignments produce better writers. A teacher can assign more writing before delivering feedback but “then kids have nothing to grow from.”

Rainey said her experience grading with GPT-4 left her in agreement with Roberts that more feedback and more frequent writing produce better writers. She feels strongly that teachers still need to oversee grading and feedback by AI, “but I think it’s amazing. I couldn’t go backwards now.”

The cost of using AI in the classroom

Contracts involving artificial intelligence can be lucrative.

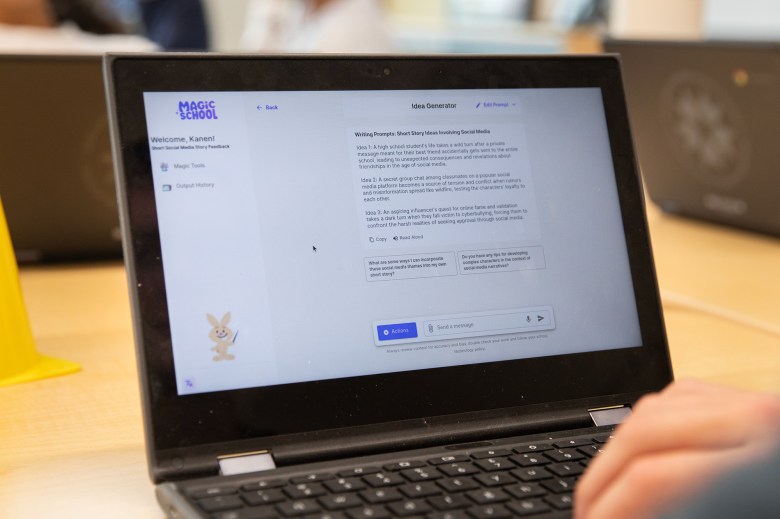

To launch a chatbot named Ed, Los Angeles Unified School District signed a $6.2 million contract for two years with the option of renewing for three additional years. Magic School AI is used by educators in Los Angeles and costs $100 per teacher per year.

Despite repeated calls and emails over the span of roughly a month, Writable and the San Diego Unified School District declined to share pricing details with CalMatters. A district spokesperson said teachers got access to Writable through a contract with Houghton Mifflin Harcourt for English language learners.

QuillBot is an AI-powered writing tool for students in grades 4-12 made by the company Quill. Quill says its tool is currently used at 1,000 schools in California and has more than 13,000 student and educator users in San Diego alone. An annual Quill Premium subscription costs $80 per teacher or $1,800 per school.

QuillBot does not generate writing for students like ChatGPT or grade writing assignments, but gives students feedback on their writing. Quill is a nonprofit that’s raised $20 million from groups like Google’s charitable foundation and the Bill and Melinda Gates Foundation over the past 10 years.

Even if a teacher or district wants to shell out for an AI tool, guidance for safe and responsible use is still getting worked out.

Governments are placing high-risk labels on forms of AI with the power to make critical decisions about whether a person gets a job or rents an apartment or receives government benefits. California Federation of Teachers President Jeff Freitas said he hasn’t considered whether AI for grading is moderate or high risk, but “it definitely is a risk to use for grading.”

The California Federation of Teachers is a union with 120,000 members. Freitas told CalMatters he’s concerned about AI having a number of consequences in the classroom. He’s worried administrators may use it to justify increasing classroom sizes or adding to teacher workloads; he’s worried about climate change and the amount of energy needed to train and deploy AI models; he’s worried about protecting students’ privacy; and he’s worried about automation bias.

Regulators around the world wrestling with AI praise approaches where it is used to augment human decision-making instead of replacing it. But it’s difficult for laws to account for automation bias and humans placing too much trust in machines.

The American Federation of Teachers created an AI working group in October 2023 to propose guidance on how educators should use the technology or talk about it in collective bargaining contract negotiations. Freitas said those guidelines are due out in the coming weeks.

“We’re trying to provide guidelines for educators to not solely rely on (AI),” he said. “It should be used as a tool, and you should not lose your critical analysis of what it’s producing for you.”

State AI guidelines for teachers

Goyette, the computer science coordinator for the education department, helped create state AI guidelines and speaks to county offices of education for in-person training on AI for educators. She also helped create an online AI training series for educators. She said the most popular online course is about workflow and efficiency, which shows teachers how to automate lesson planning and grading.

“Teachers have an incredibly important and tough job, and what’s most important is that they’re building relationships with their students,” she said. “There’s decades of research that speaks to the power of that, so if they can save time on mundane tasks so that they can spend more time with their students, that’s a win.”

Alex Kotran, chief executive of an education nonprofit that’s supported by Google and OpenAI, said they found that it’s hard to design a language model to predictably match how a teacher grades papers.

He spoke with teachers willing to accept a model that’s accurate 80% of the time in order to reap the reward of time saved, but he thinks it’s probably safe to say that a student or parent would want to make sure an AI model used for grading is even more accurate.

Kotran of the AI Education Project thinks it makes sense for school districts to adopt a policy that says teachers should be wary any time they use AI tools that can have disparate effects on students’ lives.

Even with such a policy, teachers can still fall victim to trusting AI without question. And even if the state kept track of AI used by school districts, there’s still the possibility that teachers will purchase technology for use on their personal computers.

Kotran said he routinely speaks with educators across the U.S. and is not aware of any systematic studies to verify the effectiveness and consistency of AI for grading English papers.

When teachers can’t tell if they’re cheating

Roberts, the Point Loma High School teacher, describes herself as pro-technology.

She regularly writes and speaks about AI. Her experiences have led her to the opinion that grading with AI is what’s best for her students, but she didn’t arrive at that conclusion easily.

At first she questioned whether using AI for grading and feedback could hurt her understanding of her students. Today she likens using AI to a cross-country coach riding alongside student athletes in a golf cart: an aid that helps her assist her students better.

Roberts says the average high school English teacher in her district has roughly 180 students. Grading and feedback can take between five and 10 minutes per assignment, she says, so between teaching, meetings, and other duties, it can take two to three weeks to get feedback back into the hands of students unless a teacher decides to give up large chunks of their weekends. With AI, it takes Roberts a day or two.

Ultimately she concluded that “if my students are growing as writers, then I don’t think I’m cheating.” She says AI reduces her fatigue, giving her more time to focus on struggling students and giving them more detailed feedback.

“My job is to make sure you grow, and that you’re a healthy, happy, literate adult by the time you graduate from high school, and I will use any tool that helps me do that, and I’m not going to get hung up on the moral aspects of that,” she said. “My job is not to spend every Saturday reading essays. Way too many English teachers work way too many hours a week because they are grading students the old-fashioned way.”

Roberts also thinks AI might be a less biased grader in some instances than human teachers, who sometimes adjust their grading to give students the benefit of the doubt or to be punitive if they were particularly annoying in class recently.

She isn’t worried about students cheating with AI, a concern she characterizes as a moral panic. She points to a Stanford University study released last fall which found that students cheated just as much before the advent of ChatGPT as they did a year after the release of the AI.

Goyette said she understands why students question whether some AI use by teachers is like cheating. Education department AI guidelines encourage teachers and students to use the technology more. What’s essential, Goyette said, is that teachers discuss what ethical use of AI looks like in their classroom, and convey that — like using a calculator in math class — using AI is accepted or encouraged for some assignments and not others.

For the last assignment of the year, Roberts has one final experiment to run: Students will edit an essay written entirely by AI. But they must change at least 50% of the text, make it 25% longer, write their own thesis, and add quotes from classroom reading material. The idea, she said, is to prepare them for a future where AI writes the first draft and humans edit the results to fit their needs.

“It used to be you weren’t allowed to bring a calculator into the SATs and now you’re supposed to bring your calculator so things change,” she said. “It’s just moral panic. Things change and people freak out and that’s what’s happening.”
