An A.I. Researcher Takes On Election Deepfakes

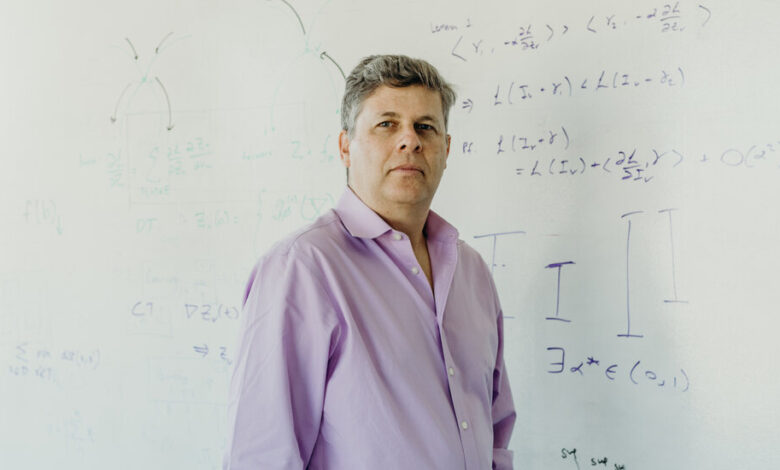

For nearly 30 years, Oren Etzioni was among the most optimistic of artificial intelligence researchers.

But in 2019 Dr. Etzioni, a University of Washington professor and founding chief executive of the Allen Institute for A.I., became one of the first researchers to warn that a new breed of A.I. would accelerate the spread of disinformation online. And by the middle of last year, he said, he was distressed that A.I.-generated deepfakes would swing a major election. He founded a nonprofit, TrueMedia.org, in January, hoping to fight that threat.

On Tuesday, the organization released free tools for identifying digital disinformation, with a plan to put them in the hands of journalists, fact checkers and anyone else trying to figure out what is real online.

The tools, available from the TrueMedia.org website to anyone approved by the nonprofit, are designed to detect fake and doctored images, audio and video. They review links to media files and quickly determine whether the files should be trusted.

Dr. Etzioni sees these tools as an improvement over the patchwork defense currently being used to detect misleading or deceptive A.I. content. But in a year when billions of people worldwide are set to vote in elections, he continues to paint a bleak picture of what lies ahead.

“I’m terrified,” he said. “There is a very good chance we are going to see a tsunami of misinformation.”

In just the first few months of the year, A.I. technologies helped create fake voice calls from President Biden, fake Taylor Swift images and audio ads, and an entire fake interview that seemed to show a Ukrainian official claiming credit for a terrorist attack in Moscow. Detecting such disinformation is already difficult — and the tech industry continues to release increasingly powerful A.I. systems that will generate increasingly convincing deepfakes and make detection even harder.

Many artificial intelligence researchers warn that the threat is gathering steam. Last month, more than a thousand people — including Dr. Etzioni and several other prominent A.I. researchers — signed an open letter calling for laws that would make the developers and distributors of A.I. audio and visual services liable if their technology was easily used to create harmful deepfakes.

At an event hosted by Columbia University on Thursday, Hillary Clinton, the former secretary of state, interviewed Eric Schmidt, the former chief executive of Google, who warned that videos, even fake ones, could “drive voting behavior, human behavior, moods, everything.”

“I don’t think we’re ready,” Mr. Schmidt said. “This problem is going to get much worse over the next few years. Maybe or maybe not by November, but certainly in the next cycle.”

The tech industry is well aware of the threat. Even as companies race to advance generative A.I. systems, they are scrambling to limit the damage that these technologies can do. Anthropic, Google, Meta and OpenAI have all announced plans to limit or label election-related uses of their artificial intelligence services. In February, 20 tech companies — including Amazon, Microsoft, TikTok and X — signed a voluntary pledge to prevent deceptive A.I. content from disrupting voting.

That could be a challenge. Companies often release their technologies as “open source” software, meaning anyone is free to use and modify them without restriction. Experts say technology used to create deepfakes — the result of enormous investment by many of the world’s largest companies — will always outpace technology designed to detect disinformation.

Last week, during an interview with The New York Times, Dr. Etzioni showed how easy it is to create a deepfake. Using a service from a sister nonprofit, CivAI, which draws on A.I. tools readily available on the internet to demonstrate the dangers of these technologies, he instantly created photos of himself in prison — somewhere he has never been.

“When you see yourself being faked, it is extra scary,” he said.

Later, he generated a deepfake of himself in a hospital bed, the kind of image he thinks could swing an election if it were applied to Mr. Biden or former President Donald J. Trump just before the vote.

TrueMedia’s tools are designed to detect forgeries like these. More than a dozen start-ups offer similar technology.

But Dr. Etzioni, while remarking on the effectiveness of his group’s tool, said no detector was perfect because all of them were driven by probabilities. Deepfake detection services have been fooled into declaring images of kissing robots and giant Neanderthals to be real photographs, raising concerns that such tools could further damage society’s trust in facts and evidence.

When Dr. Etzioni fed TrueMedia’s tools a known deepfake of Mr. Trump sitting on a stoop with a group of young Black men, they labeled it “highly suspicious” — their highest level of confidence. When he uploaded another known deepfake of Mr. Trump with blood on his fingers, they were “uncertain” whether it was real or fake.

“Even using the best tools, you can’t be sure,” he said.

The Federal Communications Commission recently outlawed A.I.-generated robocalls. Some companies, including OpenAI and Meta, are now labeling A.I.-generated images with watermarks. And researchers are exploring additional ways of separating the real from the fake.

The University of Maryland is developing a cryptographic system based on QR codes to authenticate unaltered live recordings. A study released last month asked dozens of adults to breathe, swallow and think while talking so their speech pause patterns could be compared with the rhythms of cloned audio.

But like many other experts, Dr. Etzioni warns that image watermarks are easily removed. And though he has dedicated his career to fighting deepfakes, he acknowledges that detection tools will struggle to surpass new generative A.I. technologies.

Since he created TrueMedia.org, OpenAI has unveiled two new technologies that promise to make his job even harder. One can recreate a person’s voice from a 15-second recording. Another can generate full-motion videos that look like something plucked from a Hollywood movie. OpenAI is not yet sharing these tools with the public, as it works to understand the potential dangers.

(The Times has sued OpenAI and its partner, Microsoft, on claims of copyright infringement involving artificial intelligence systems that generate text.)

Ultimately, Dr. Etzioni said, fighting the problem will require widespread cooperation among government regulators, the companies creating A.I. technologies, and the tech giants that control the web browsers and social media networks where disinformation is spread. He said, though, that the likelihood of that happening before the fall elections was slim.

“We are trying to give people the best technical assessment of what is in front of them,” he said. “They still need to decide if it is real.”