New AI model deliberately corrupts training images to sidestep copyright

A big problem with text-to-image generators is their ability to replicate the original works used to train them, thereby infringing an artist’s copyright. Under US law, if you create an original work and ‘fix’ it in a tangible form, you own its copyright – literally, the right to copy it. A copyrighted image can’t, in most cases, be used without the creator’s authorization.

In May, Google’s parent company, Alphabet, was hit with a class action copyright lawsuit by a group of artists claiming it had used their work without permission to train its AI-powered image generator, Imagen. Stability AI, Midjourney and DeviantArt – all of them use Stability’s Stable Diffusion tool – are facing similar suits.

To avoid this problem, researchers from the University of Texas (UT) at Austin and the University of California (UC), Berkeley, have developed a diffusion-based generative AI framework that is trained only on images that have been corrupted beyond recognition, reducing the likelihood that the AI will memorize and replicate an original work.

Diffusion models are machine-learning algorithms that are trained by progressively adding noise to their training data and learning to reverse that process; they then generate new, high-quality data by running the reversal starting from pure noise. Recent studies have shown that these models can memorize examples from their training set, which has obvious implications for privacy, security, and copyright. Here’s an example not related to artwork: an AI may need to be trained on X-ray scans without memorizing images of specific patients, which would breach those patients’ privacy. To avoid this, model makers can introduce image corruption.
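For readers who want to see what "progressively adding noise" looks like in practice, here is a minimal sketch of the noising half of a diffusion model. It assumes the widely used DDPM-style formulation with a linear noise schedule, which the article does not specify and which may differ from what the researchers used. All function names and parameter values are hypothetical, chosen for illustration only.

```python
import math
import random

def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Hypothetical linear noise schedule: one noise level (beta) per step."""
    if num_steps == 1:
        return [beta_start]
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]

def cumulative_signal_fraction(betas):
    """alpha_bar[t] is the running product of (1 - beta) up to step t.

    It shrinks toward 0 as more of the original signal is replaced by noise.
    """
    alpha_bars = []
    running = 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def add_noise(pixels, t, alpha_bars, rng):
    """Sample x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * eps.

    Returns the noisy pixels and the Gaussian noise that was mixed in; a
    denoising network would be trained to predict that noise.
    """
    signal_scale = math.sqrt(alpha_bars[t])
    noise_scale = math.sqrt(1.0 - alpha_bars[t])
    noise = [rng.gauss(0.0, 1.0) for _ in pixels]
    noisy = [signal_scale * p + noise_scale * e for p, e in zip(pixels, noise)]
    return noisy, noise

# Assumed setup: 1,000 steps and a tiny 8-pixel "image" of mid-grey values.
betas = linear_beta_schedule(1000)
alpha_bars = cumulative_signal_fraction(betas)
rng = random.Random(0)
clean = [0.5] * 8
slightly_noisy, _ = add_noise(clean, 0, alpha_bars, rng)    # almost unchanged
mostly_noise, _ = add_noise(clean, 999, alpha_bars, rng)    # almost pure noise
```

Only the forward (noising) direction is shown. The learned reverse direction, where memorization can creep in, requires a trained neural network and is beyond a short sketch.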

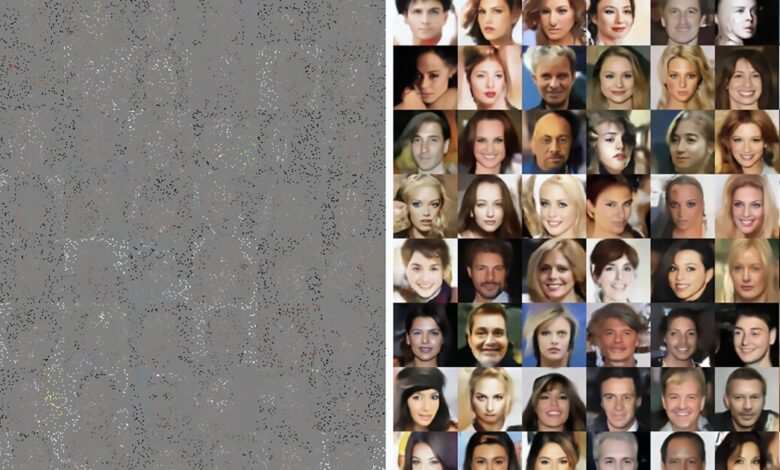

The researchers demonstrated with their Ambient Diffusion framework that a diffusion model can be trained to generate high-quality images using only highly corrupted samples.

Daras et al.

The above image shows the difference in image output when corruption is used. The researchers first trained their model with 3,000 ‘clean’ images from CelebA-HQ, a database of high-quality images of celebrities. It generated images nearly identical to the originals (the left panel) when prompted. Then, they retrained the model using 3,000 highly corrupted images, where up to 90% of individual pixels were randomly masked. While the model generated lifelike human faces, the results were far less similar (right panel).
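The kind of corruption described above, random masking of individual pixels, can be sketched in a few lines. This is an illustration only, not the researchers' code: it assumes each pixel is masked independently with a fixed probability and that masked pixels are set to zero, and the function and variable names are hypothetical.

```python
import random

def mask_pixels(image, mask_probability, rng):
    """Zero out each pixel independently with probability mask_probability.

    Returns (corrupted, mask), where mask[r][c] is 1 for a pixel that was
    kept and 0 for a pixel that was masked.
    """
    corrupted, mask = [], []
    for row in image:
        keep_row = [0 if rng.random() < mask_probability else 1 for _ in row]
        mask.append(keep_row)
        corrupted.append([p * k for p, k in zip(row, keep_row)])
    return corrupted, mask

# Assumed example: a 64x64 all-white image with 90% of its pixels masked.
rng = random.Random(42)
image = [[1.0] * 64 for _ in range(64)]
corrupted, mask = mask_pixels(image, 0.9, rng)
kept_fraction = sum(map(sum, mask)) / (64 * 64)
```

With a masking probability of 0.9, roughly one pixel in ten survives, so the model never sees a complete original during training.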

“The framework could prove useful for scientific and medical applications, too,” said Adam Klivans, a computer science professor at UT Austin and study co-author. “That would be true for basically any research where it is expensive or impossible to have a full set of uncorrupted data, from black hole imaging to certain types of MRI scans.”

As with existing text-to-image generators, the results aren’t perfect every time. The point is that artists can rest a little easier knowing that a model like Ambient Diffusion is designed not to memorize and replicate their original works. Will it stop other AI models from memorizing their original images and replicating them? No, but that’s what the courts are for.

The researchers have made their code and Ambient Diffusion model open-source to encourage further research. Both are available on GitHub.

The study was published on the pre-print website arXiv.

Source: UT at Austin