This AI Paper from Princeton and the University of Warwick Proposes a Novel Artificial Intelligence Approach to Enhance the Utility of LLMs as Cognitive Models

Researchers studying large language models (LLMs) have found that they behave much like humans in cognitive tasks, often making judgments and decisions that deviate from rational norms, for example by showing risk aversion and loss aversion. LLMs also exhibit human-like biases and errors, particularly in probability judgment and arithmetic tasks. These similarities suggest that LLMs could serve as models of human cognition. However, significant challenges remain: LLMs are trained on far more data than any human encounters, and the origins of these behavioral similarities are unclear.

The suitability of LLMs as models of human cognition is debated for several reasons. LLMs are trained on much larger datasets than humans, they may have been exposed to the test questions themselves, and value alignment processes may artificially enhance their human-like behavior. Despite these challenges, fine-tuning LLMs such as LLaMA-1-65B on human choice datasets has improved accuracy in predicting human behavior. Prior research has also highlighted the value of synthetic datasets for enhancing LLM capabilities, particularly in problem-solving tasks like arithmetic. Pretraining on such datasets can significantly improve performance in predicting human decisions.

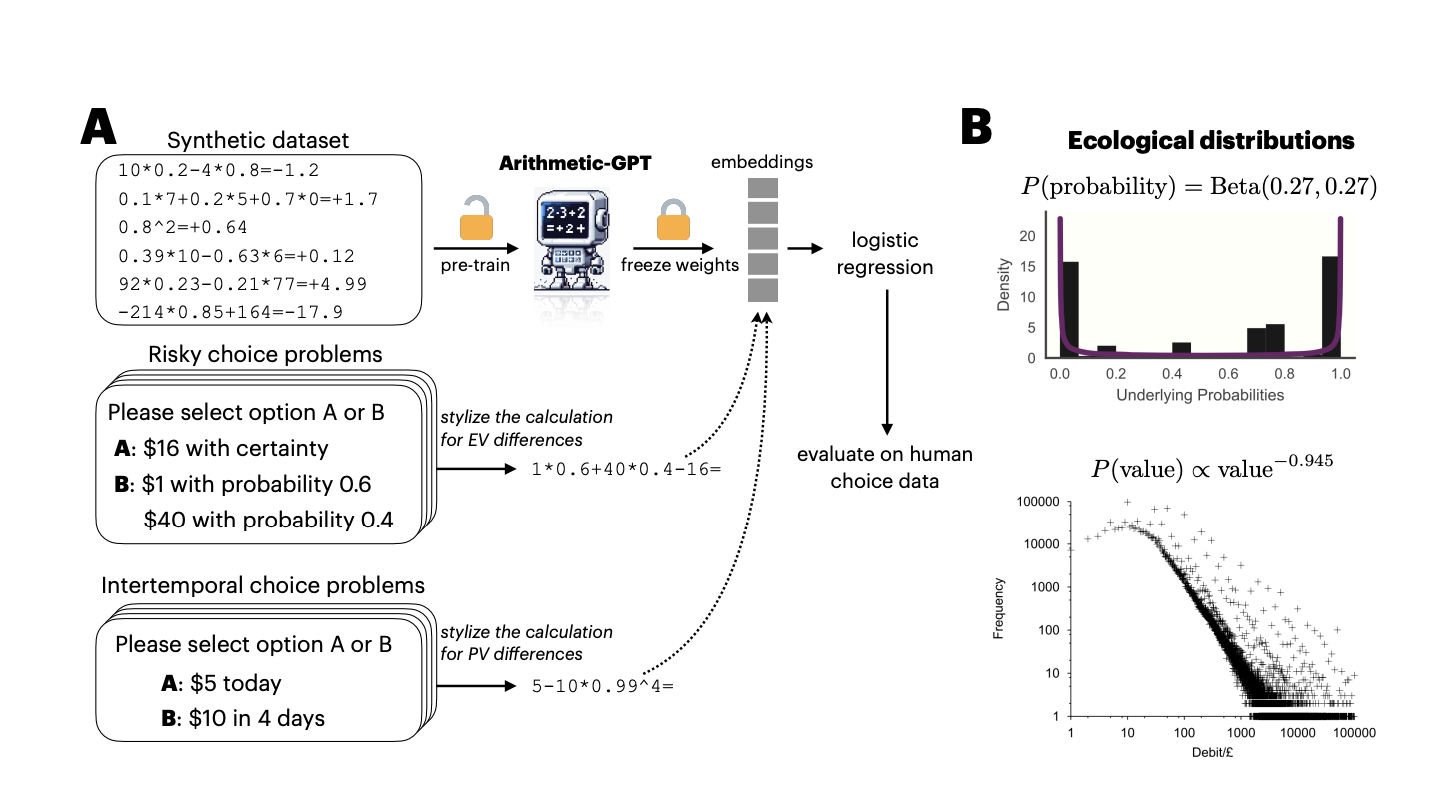

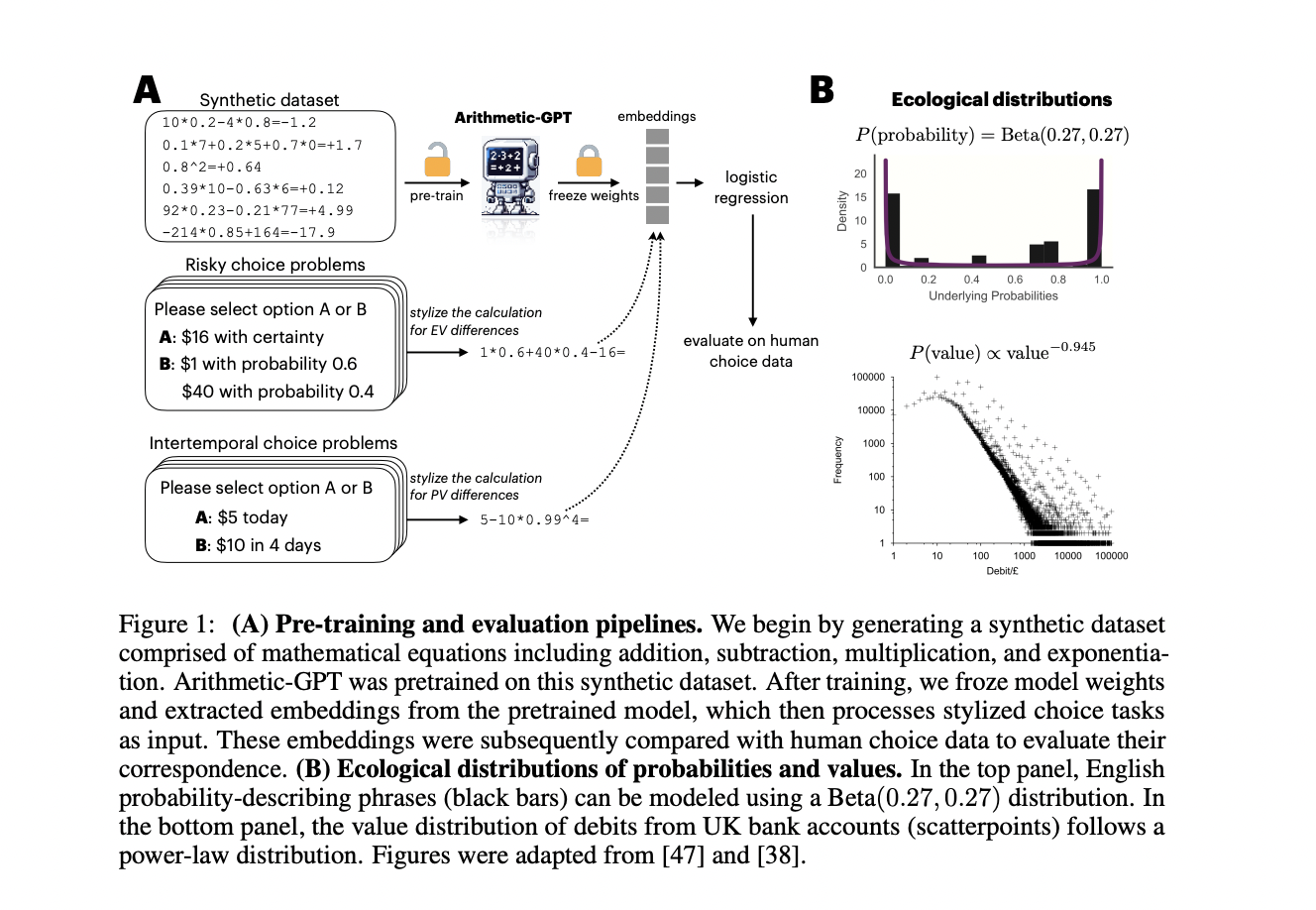

Researchers from Princeton University and the University of Warwick propose enhancing the utility of LLMs as cognitive models by (i) using computationally equivalent tasks that both LLMs and rational agents must master to solve a cognitive problem and (ii) examining the task distributions required for LLMs to exhibit human-like behavior. Applied to decision-making, specifically risky and intertemporal choice, their model Arithmetic-GPT, an LLM pretrained on an ecologically valid arithmetic dataset, predicts human behavior better than many traditional cognitive models. According to the authors, this pretraining alone suffices to align the model closely with human decision-making.
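To make the idea of a computationally equivalent task concrete, here is a minimal sketch. It assumes, as one common reading that this article does not spell out, that risky choice reduces to expected-value arithmetic and intertemporal choice to discounted-value arithmetic. The function names, the exponential discount form, and the discount rate of 0.9 are illustrative choices, not details taken from the paper.

```python
def expected_value(gamble):
    """Sum of probability * payoff over the outcomes of a gamble."""
    return sum(p * x for p, x in gamble)


def present_value(amount, delay, discount=0.9):
    """Exponentially discounted value of a payoff received after `delay` periods."""
    return amount * discount ** delay


# A rational agent picks the option with the larger computed value.
risky = [(0.8, 50), (0.2, 0)]   # 80% chance of 50, otherwise nothing
safe = [(1.0, 35)]              # 35 for sure
prefers_risky = expected_value(risky) > expected_value(safe)

later = present_value(100, delay=3)   # 100 received in three periods
sooner = present_value(80, delay=0)   # 80 received now
prefers_later = later > sooner
```

Both comparisons come down to multiplication and addition, which is why an arithmetic pretraining task is a plausible proxy for the computation behind these choices.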

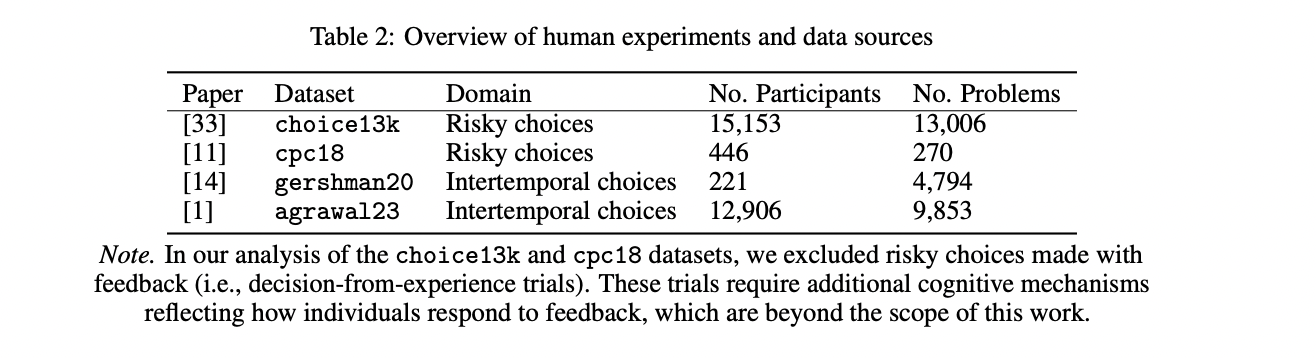

The researchers address the challenges of using LLMs as cognitive models by defining a data generation algorithm for creating synthetic datasets and by gaining access to the model’s neural activation patterns, which are crucial for studying decision-making. They pretrained a small language model with a Generative Pretrained Transformer (GPT) architecture, named Arithmetic-GPT, on arithmetic tasks, using synthetic datasets generated to reflect realistic probabilities and values. Pretraining used a context length of 26, a batch size of 2048, and a learning rate of 10⁻³. Human decision-making datasets on risky and intertemporal choice were then reanalyzed to evaluate the model’s performance.
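A synthetic arithmetic dataset of this kind could be generated along the following lines. This is a rough sketch, not the authors’ algorithm: the equation format, the Beta(0.5, 0.5) distribution for probabilities, the Pareto distribution for values, and the cap of 300 are all assumptions chosen only to illustrate skewed "realistic" sampling.

```python
import random


def make_example(rng, max_value=300):
    """Return one equation string such as '0.25*12+0.80*3=5.40'."""
    # Probabilities: Beta(0.5, 0.5) piles mass near 0 and 1 (assumed shape).
    p = round(rng.betavariate(0.5, 0.5), 2)
    q = round(rng.betavariate(0.5, 0.5), 2)
    # Values: heavy-tailed, so small magnitudes are far more common (assumed shape).
    x = min(int(rng.paretovariate(1.0)), max_value)
    y = min(int(rng.paretovariate(1.0)), max_value)
    op = rng.choice("+-")
    answer = p * x + q * y if op == "+" else p * x - q * y
    return f"{p:.2f}*{x}{op}{q:.2f}*{y}={answer:.2f}"


def make_dataset(n, seed=0):
    """Generate n equation strings reproducibly from a seed."""
    rng = random.Random(seed)
    return [make_example(rng) for _ in range(n)]
```

Calling `make_dataset(5)` returns five equation strings that a small model could be trained to complete. Swapping in different distributions is a one-line change, which is the kind of control over task distributions that the approach relies on.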

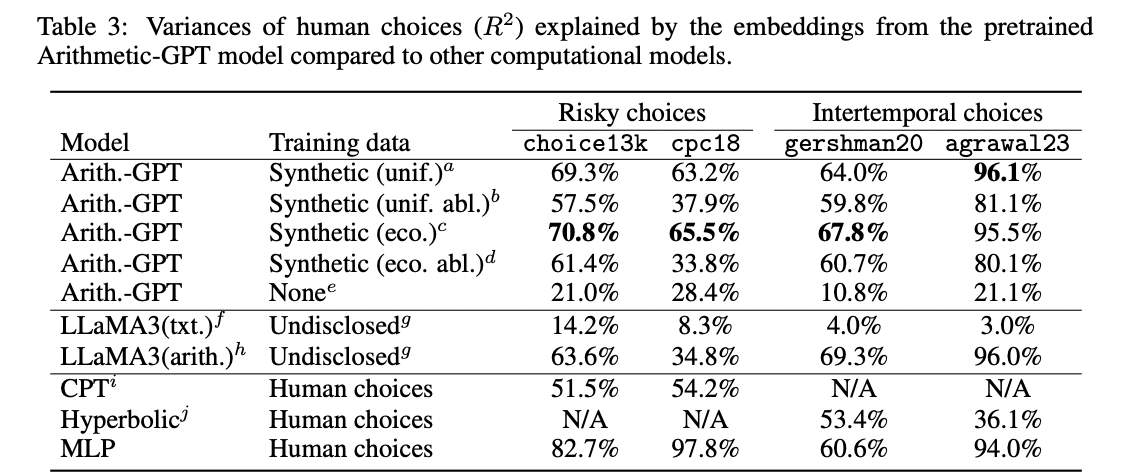

The experimental results show that embeddings from the Arithmetic-GPT model, pretrained on ecologically valid synthetic datasets, most accurately predict human choices in decision-making tasks. Logistic regression with the embeddings as independent variables and human choice probabilities as the dependent variable yields higher adjusted R² values than other models, including LLaMA-3-70B-Instruct. Benchmarks against behavioral models and multilayer perceptrons (MLPs) reveal that while MLPs generally outperform other models, Arithmetic-GPT embeddings still correspond strongly with human data, particularly in intertemporal choice tasks. Robustness is confirmed with 10-fold cross-validation.
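The evaluation described above can be sketched in plain Python. This is a simplified illustration under stated assumptions: the two-feature toy data stands in for real embeddings and human choice probabilities, the gradient-descent fit is a generic logistic regression rather than the authors’ exact procedure, and computing adjusted R² on pooled held-out predictions is a choice made here for brevity.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(X, w, b):
    return [sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b) for x in X]


def fit_logistic(X, y, lr=0.5, steps=1000):
    """Gradient descent on cross-entropy; targets y are choice probabilities in [0, 1]."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(steps):
        errs = [p - t for p, t in zip(predict(X, w, b), y)]
        w = [wj - lr * sum(e * x[j] for e, x in zip(errs, X)) / n
             for j, wj in enumerate(w)]
        b -= lr * sum(errs) / n
    return w, b


def adjusted_r2(y, y_hat, n_features):
    """R^2 penalized for the number of predictors."""
    n = len(y)
    mean = sum(y) / n
    ss_res = sum((t - p) ** 2 for t, p in zip(y, y_hat))
    ss_tot = sum((t - mean) ** 2 for t in y)
    r2 = 1.0 - ss_res / ss_tot
    return 1.0 - (1.0 - r2) * (n - 1) / (n - n_features - 1)


def kfold_predictions(X, y, k=10, seed=0):
    """Predict every item from a model fitted on the other k-1 folds."""
    idx = list(range(len(X)))
    random.Random(seed).shuffle(idx)
    y_hat = [0.0] * len(X)
    for fold in range(k):
        test = sorted(idx[fold::k])
        held = set(test)
        train = [i for i in idx if i not in held]
        w, b = fit_logistic([X[i] for i in train], [y[i] for i in train])
        for i, p in zip(test, predict([X[i] for i in test], w, b)):
            y_hat[i] = p
    return y_hat


# Toy stand-in for embeddings (2 features) and human choice probabilities.
rng = random.Random(0)
X = [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(60)]
y = [sigmoid(2.0 * x1 - 1.0 * x2 + 0.3) for x1, x2 in X]

w, b = fit_logistic(X, y)
in_sample = adjusted_r2(y, predict(X, w, b), n_features=2)
held_out = adjusted_r2(y, kfold_predictions(X, y), n_features=2)
```

Because the toy targets are generated from a noise-free logistic function, both scores come out close to 1. With real embeddings, the gap between `in_sample` and `held_out` indicates how much of the fit survives cross-validation.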

The study concludes that a small language model, specifically Arithmetic-GPT pretrained on ecologically valid synthetic datasets, can closely model human behavior in decision-making tasks, outperforming many traditional cognitive models and some advanced LLMs such as LLaMA-3-70B-Instruct. The approach addresses the key challenges by using synthetic training data and direct access to neural activation patterns. The findings underscore the potential of LLMs as cognitive models, with relevance for both cognitive science and machine learning, and the results hold up under 10-fold cross-validation.

Check out the Paper. All credit for this research goes to the researchers of this project.
