Why OpenAI Is Getting Harder to Trust

- OpenAI appointed former NSA Director Paul Nakasone to its board of directors.

- Nakasone’s hiring aims to bolster AI security but raises surveillance concerns.

- The company’s internal safety group has also effectively disbanded.

There are creepy undercover security guards outside its office. It just appointed a former NSA director to its board. And its internal working group meant to promote the safe use of artificial intelligence has effectively disbanded.

OpenAI is feeling a little less open every day.

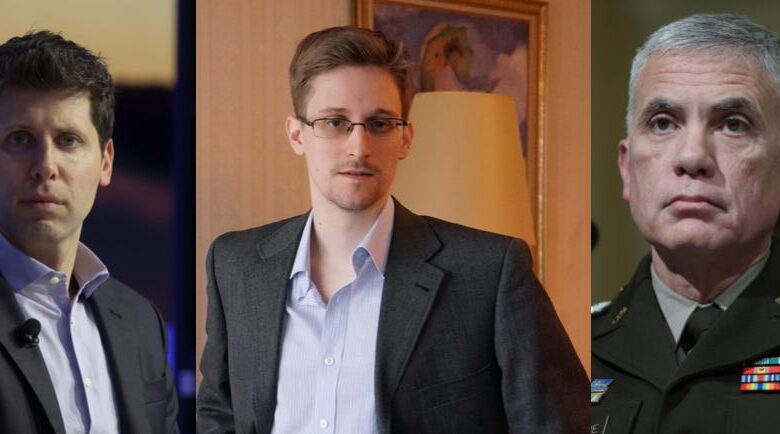

In its latest eyebrow-raising move, the company said Friday it had appointed former NSA Director Paul Nakasone to its board of directors.

In addition to leading the NSA, Nakasone was the head of the US Cyber Command — the cybersecurity division of the Defense Department. OpenAI says Nakasone’s hiring represents its “commitment to safety and security” and emphasizes the importance of cybersecurity as AI continues to evolve.

“OpenAI’s dedication to its mission aligns closely with my own values and experience in public service,” Nakasone said in a statement. “I look forward to contributing to OpenAI’s efforts to ensure artificial general intelligence is safe and beneficial to people around the world.”

But critics worry Nakasone’s hiring might represent something else: surveillance.

Edward Snowden, the US whistleblower who leaked classified documents about surveillance in 2013, said in a post on X that the hiring of Nakasone was a “calculated betrayal of the rights of every person on Earth.”

“They’ve gone full mask-off: do not ever trust OpenAI or its products (ChatGPT etc),” Snowden wrote.

In another comment on X, Snowden said the “intersection of AI with the ocean of mass surveillance data that’s been building up over the past two decades is going to put truly terrible powers in the hands of an unaccountable few.”

Sen. Mark Warner, a Democrat from Virginia and the chairman of the Senate Intelligence Committee, on the other hand, described Nakasone’s hiring as a “huge get.”

“There’s nobody in the security community, broadly, that’s more respected,” Warner told Axios.

Nakasone’s expertise in security may be needed at OpenAI, where critics have worried that security issues could open it up to attacks.

OpenAI fired researcher Leopold Aschenbrenner in April after he sent a memo detailing a “major security incident.” He described the company’s security as “egregiously insufficient” to protect against theft by foreign actors.

Shortly after, OpenAI’s superalignment team — which was focused on developing AI systems compatible with human interests — abruptly disintegrated after two of the company’s most prominent safety researchers quit.

Jan Leike, one of the departing researchers, said he had been “disagreeing with OpenAI leadership about the company’s core priorities for quite some time.”

Ilya Sutskever, OpenAI’s former chief scientist, who initially launched the superalignment team, was more reticent about his reasons for leaving. But company insiders said he’d been on shaky ground because of his role in the failed ouster of CEO Sam Altman. Sutskever disapproved of Altman’s aggressive approach to AI development, which fueled their power struggle.

And if all of that wasn’t enough, even locals living and working near OpenAI’s office in San Francisco say the company is starting to creep them out. A cashier at a neighboring pet store told The San Francisco Standard that the office has a “secretive vibe.”

Several workers at neighboring businesses say men resembling undercover security guards stand outside the building but won’t say they work for OpenAI.

“[OpenAI] is not a bad neighbor,” one said. “But they’re secretive.”